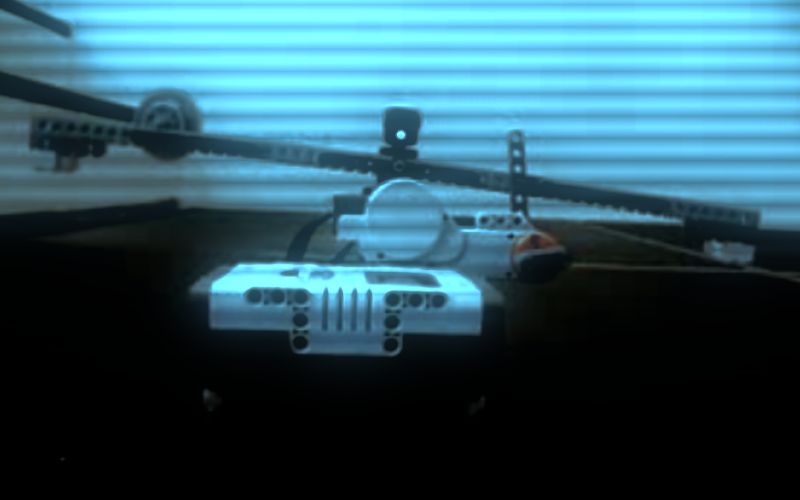

I was interested in using a camera and display as a filter of sorts for a 2a vehicle to see how it would affect the behavior. The results were pretty interesting and fun to play with, and I would encourage you to try it out. Any device with a camera and a screen can work as the “eye” for this robot. The concept is based on Dr. Braitenberg's work in Synthetic Psychology, more specifically the Braitenberg 2a vehicle, which uses two light sensors and two motors. The light sensor outputs are crossed so that the Right Light Sensor is connected to the Left Motor, and the Left Light Sensor is connected to the Right Motor.
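To make the crossed wiring concrete, here is a minimal sketch in Python. It is only an illustration, not the code running on this robot: the function name `crossed_drive`, the `gain` parameter, and the 0.0 to 1.0 range for readings are all assumptions made for the example.

```python
def crossed_drive(left_light, right_light, gain=1.0):
    """Map two light readings to two motor speeds with crossed connections.

    Readings are assumed to be normalized to 0.0 (dark) .. 1.0 (bright).
    The names and the gain parameter are hypothetical, for illustration only.
    """
    left_motor = gain * right_light   # Right Light Sensor drives the Left Motor
    right_motor = gain * left_light   # Left Light Sensor drives the Right Motor
    return left_motor, right_motor


# Brighter light on the right side speeds up the left motor.
left_motor, right_motor = crossed_drive(left_light=0.2, right_light=0.8)
```

With this wiring, more light on the right makes the left motor run faster than the right one, so the vehicle steers toward the brighter side.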

1) In-Camera Mode has a slight delay in updating the screen. Depending on the speed of the robot, this delay can act as a smoothing effect on the robot's behavior.

2) The iPhone has a fixed FOV (Field of View). Using a digital camera, one could change the behavior by zooming in or out.

3) I placed 2 layers of translucent tape over each light sensor to diffuse the light from the pixels that are being sampled on the left and right sides of the screen. This reduces the overall sensitivity of the robot but further smooths out the motion. I will post more on this as time allows.
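On the robot itself the sampling happens optically: each light sensor sits against the screen and the tape blends the nearby pixels together. If you want to experiment in software first, the rough idea can be sketched as averaging the brightness of the left and right halves of a frame. This is a simplified stand-in under stated assumptions, not a model of the actual sensors: the function name `side_brightness` and the frame format (rows of grayscale values from 0 to 255) are hypothetical.

```python
def side_brightness(frame):
    """Return (left_mean, right_mean) brightness for a grayscale frame.

    The frame is assumed to be a non-empty list of equal-length rows,
    each holding pixel values from 0 (black) to 255 (white).
    Averaging each half loosely imitates a sensor behind diffusing tape.
    """
    mid = len(frame[0]) // 2
    left = [pixel for row in frame for pixel in row[:mid]]
    right = [pixel for row in frame for pixel in row[mid:]]
    return sum(left) / len(left), sum(right) / len(right)


# A tiny 2x4 frame: dark on the left, bright on the right.
frame = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
]
left_mean, right_mean = side_brightness(frame)
```

Averaging over a larger region responds less sharply to any single bright pixel, which is the same trade-off noted above: lower sensitivity in exchange for smoother motion.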